Journal of Geo-information Science

Accurate Extraction of Artificial Pit-pond Integrating Edge Features and Semantic Information

Received date: 2021-08-20

Revised date: 2021-09-30

Online published: 2022-06-25

Supported by

National Natural Science Foundation of China (42071316)

Fundamental Research Funds for the Central Universities (B200202008)

Open Foundation of Key Laboratory of National Geographic Census and Monitoring, Ministry of Natural Resources (2020NGCM03)

Chongqing Agricultural Industry Digital Map Project (21C00346)

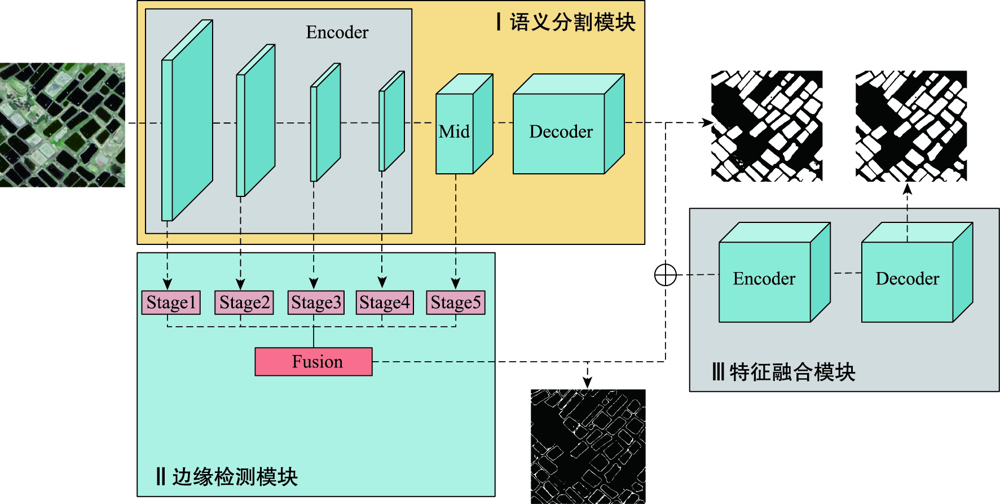

Copyright

High-resolution remote sensing images contain detailed spatial, geometric, and textural features. They provide useful visual descriptors such as spot position, shape, and texture, and serve as a reliable and abundant data source for the accurate extraction of spatial elements. However, traditional methods require researchers to extract these features manually and suffer from limitations such as low positioning accuracy and rough edges. Deep learning methods can extract typical elements such as water bodies, buildings, and roads from remote sensing images with higher accuracy and without prior knowledge. The extracted element information provides a data basis for applications such as urban and rural land resource accounting and planning, disaster risk assessment, and industrial output evaluation and estimation. However, conventional deep learning semantic segmentation methods focus on improving semantic segmentation accuracy when extracting remote sensing elements and pay less attention to boundary accuracy. To address the rough edges and heavy noise that deep learning methods produce when extracting targets from high-resolution remote sensing images, a network model that combines the edge and semantic features of targets was proposed to extract artificial pit-ponds. An improved U-Net semantic segmentation network was used to extract rich semantic information about targets in remote sensing images, and an edge-structure extraction subnetwork was developed to acquire multi-scale edge features. An encoding-decoding subnetwork that combines the edge features and semantic information was then applied to extract image objects accurately. Boundary information was extracted at the same time, and feature fusion and noise screening were carried out through the encoding-decoding subnetwork.
The proposed method was used to extract artificial pit-ponds against a complex background in the Leizhou Peninsula. First, we prepared labeled training and testing images for the experiment and applied data augmentation to increase the number of samples. Second, we defined a set of evaluation indicators for the extraction results. Finally, we evaluated the performance of the model from multiple perspectives, including semantic accuracy and boundary accuracy. The results show that the proposed method performed best in the evaluation: its F score and boundary F score reached 97.61% and 83.01%, respectively. This demonstrates the effectiveness of fusing high-level semantic information with low-level edge features for improving the accuracy of remote sensing target extraction.

YANG Xianzeng, ZHOU Ya'nan, ZHANG Xin, LI Rui, YANG Dan. Accurate Extraction of Artificial Pit-pond Integrating Edge Features and Semantic Information[J]. Journal of Geo-information Science, 2022, 24(4): 766-779. DOI: 10.12082/dqxxkx.2022.210489

Tab. 1 Evaluation indexes for extraction results

| Semantic accuracy | Edge accuracy (non-relaxed boundary) | Edge accuracy (relaxed boundary) |
|---|---|---|
| Precision (P) | Boundary Precision (BP) | Relaxed Boundary Precision (RBP) |
| Recall (R) | Boundary Recall (BR) | Relaxed Boundary Recall (RBR) |
| F-Score (F1) | Boundary F-Score (Fb) | Relaxed Boundary F-Score (RFb) |
| Intersection over Union (IoU) | - | - |
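The semantic indexes in Tab. 1 can be illustrated with a short sketch. It assumes the usual pixel-wise definitions of precision, recall, F-score, and IoU on binary masks; the source does not give its formulas or say how scores are averaged over images, so the function name `semantic_metrics` and the toy masks are hypothetical and the numbers it produces are not those of the paper.

```python
import numpy as np


def semantic_metrics(pred, truth):
    """Pixel-wise P, R, F1 and IoU for two binary masks of equal shape.

    Assumed (standard) definitions, not taken from the source:
    P = TP/(TP+FP), R = TP/(TP+FN), F1 = 2PR/(P+R), IoU = TP/(TP+FP+FN).
    """
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if pred.shape != truth.shape:
        raise ValueError("pred and truth must have the same shape")

    tp = int(np.logical_and(pred, truth).sum())    # pond predicted, pond in label
    fp = int(np.logical_and(pred, ~truth).sum())   # pond predicted, background in label
    fn = int(np.logical_and(~pred, truth).sum())   # background predicted, pond in label

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {"P": precision, "R": recall, "F1": f1, "IoU": iou}


# Toy example: 1 marks pit-pond pixels, 0 marks background.
truth_mask = [[1, 1, 0],
              [1, 0, 0]]
pred_mask = [[1, 1, 1],
             [0, 0, 0]]
scores = semantic_metrics(pred_mask, truth_mask)
print(scores)
```

In this toy case two pond pixels are found, one is missed, and one background pixel is falsely labeled, which is enough to exercise every term in the four formulas.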

Tab. 2 Comparison of evaluation indexes of semantic accuracy of extraction results

| Model | Precision (P) | Recall (R) | F-Score (F1) | Intersection over Union (IoU) |
|---|---|---|---|---|
| U-Net | 0.9501 | 0.9516 | 0.9507 | 0.9334 |
| DeepLabV3+ | 0.9632 | 0.9640 | 0.9635 | 0.9360 |
| D-LinkNet | *0.9725* | 0.9530 | 0.9626 | 0.9371 |
| ES-Net* | 0.9707 | *0.9652* | *0.9679* | *0.9379* |
| ES-Net | **0.9775** | **0.9748** | **0.9761** | **0.9534** |

Note: The best value in each column is shown in bold and the second-best in italics.

Tab. 3 Comparison of evaluation indexes of edge accuracy of extraction results

| Model | Boundary precision (BP) | Relaxed boundary precision (RBP) | Boundary recall (BR) | Relaxed boundary recall (RBR) | Boundary F-score (Fb) | Relaxed boundary F-score (RFb) |
|---|---|---|---|---|---|---|
| U-Net | 0.8040 | 0.8440 | *0.8077* | 0.8477 | 0.8058 | 0.8459 |
| DeepLabV3+ | 0.8079 | 0.8501 | 0.8011 | *0.8491* | 0.8044 | *0.8495* |
| D-LinkNet | 0.8071 | 0.8469 | 0.8051 | 0.8462 | 0.8061 | 0.8466 |
| ES-Net* | *0.8170* | *0.8552* | 0.7978 | 0.8377 | *0.8073* | 0.8464 |
| ES-Net | **0.8300** | **0.8646** | **0.8301** | **0.8649** | **0.8301** | **0.8647** |

Note: The best value in each column is shown in bold and the second-best in italics.
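The difference between the non-relaxed scores (BP, BR, Fb) and the relaxed scores (RBP, RBR, RFb) can be sketched as follows. The source does not define the relaxation, so this example assumes a common convention: a boundary pixel counts as correct if it lies within `tolerance` pixels (Chebyshev distance) of the other mask's boundary, with `tolerance=0` giving the non-relaxed case. The helper names, the 4-neighbour boundary definition, and the tolerance value are all assumptions made for illustration.

```python
import numpy as np


def dilate(mask, radius):
    """Dilate a boolean mask with a (2*radius+1) x (2*radius+1) square window."""
    mask = np.asarray(mask, dtype=bool)
    if radius == 0:
        return mask.copy()
    h, w = mask.shape
    padded = np.pad(mask, radius, mode="constant", constant_values=False)
    out = np.zeros_like(mask)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out |= padded[dy:dy + h, dx:dx + w]
    return out


def boundary(mask):
    """Foreground pixels with at least one 4-neighbour that is background.

    Pixels outside the image are treated as background (an assumption).
    """
    mask = np.asarray(mask, dtype=bool)
    p = np.pad(mask, 1, mode="constant", constant_values=False)
    interior = (p[1:-1, 1:-1] & p[:-2, 1:-1] & p[2:, 1:-1]
                & p[1:-1, :-2] & p[1:-1, 2:])
    return mask & ~interior


def boundary_metrics(pred, truth, tolerance=0):
    """Boundary precision, recall and F-score with an optional pixel tolerance."""
    pb, tb = boundary(pred), boundary(truth)
    n_pred, n_truth = int(pb.sum()), int(tb.sum())
    # Predicted boundary pixels that fall near the reference boundary.
    bp = float((pb & dilate(tb, tolerance)).sum()) / n_pred if n_pred else 0.0
    # Reference boundary pixels that fall near the predicted boundary.
    br = float((tb & dilate(pb, tolerance)).sum()) / n_truth if n_truth else 0.0
    fb = 2 * bp * br / (bp + br) if bp + br else 0.0
    return {"BP": bp, "BR": br, "Fb": fb}


# Toy example: the prediction is the reference square shifted right by one pixel.
truth_mask = np.zeros((8, 8), dtype=bool)
truth_mask[2:6, 2:6] = True
pred_mask = np.zeros((8, 8), dtype=bool)
pred_mask[2:6, 3:7] = True

strict = boundary_metrics(pred_mask, truth_mask, tolerance=0)
relaxed = boundary_metrics(pred_mask, truth_mask, tolerance=1)
print(strict, relaxed)
```

A one-pixel shift halves the strict boundary scores in this toy case while the relaxed scores stay at 1.0, which is consistent with the relaxed columns in Tab. 3 being higher than the non-relaxed ones for every model.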

